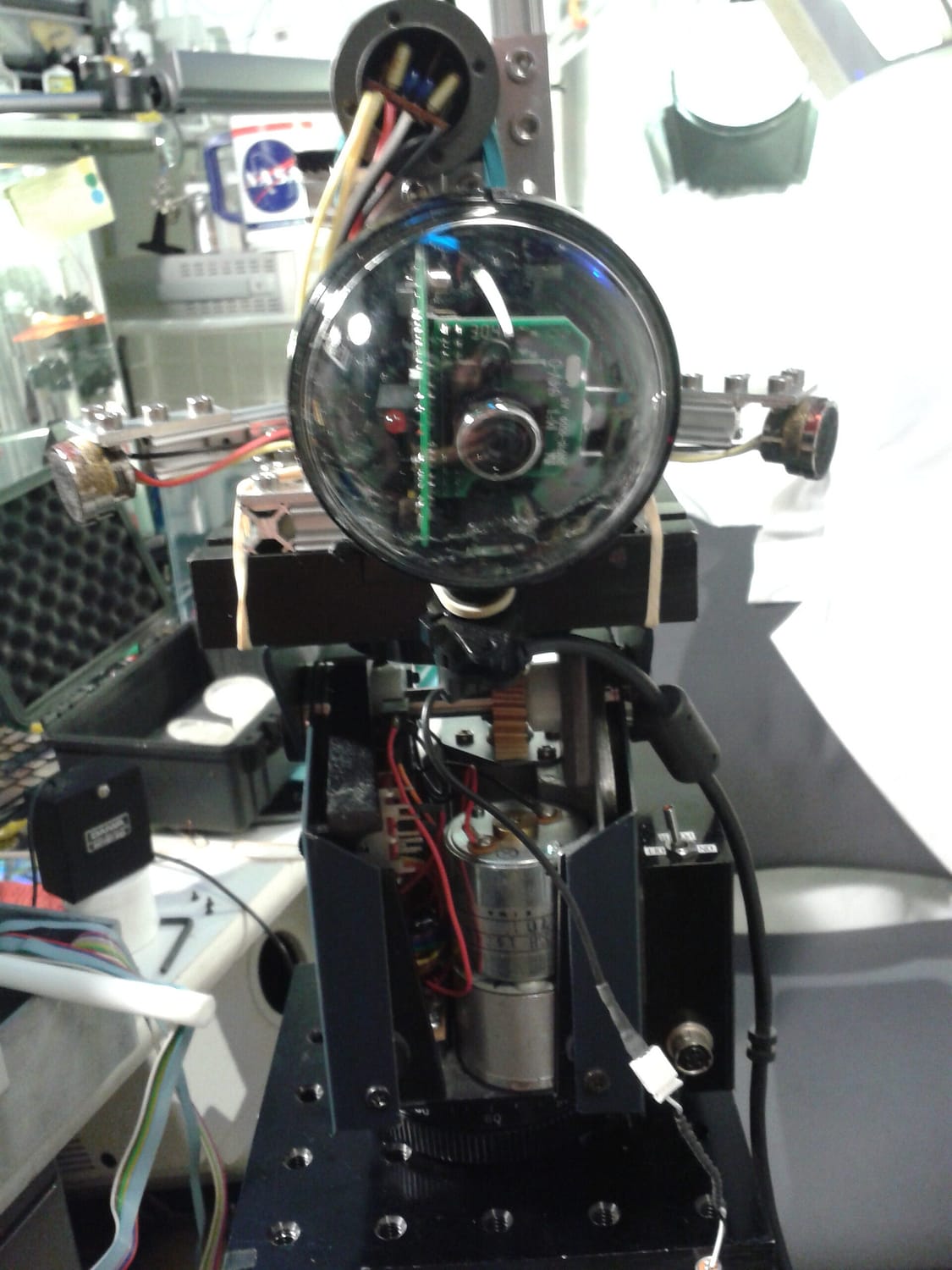

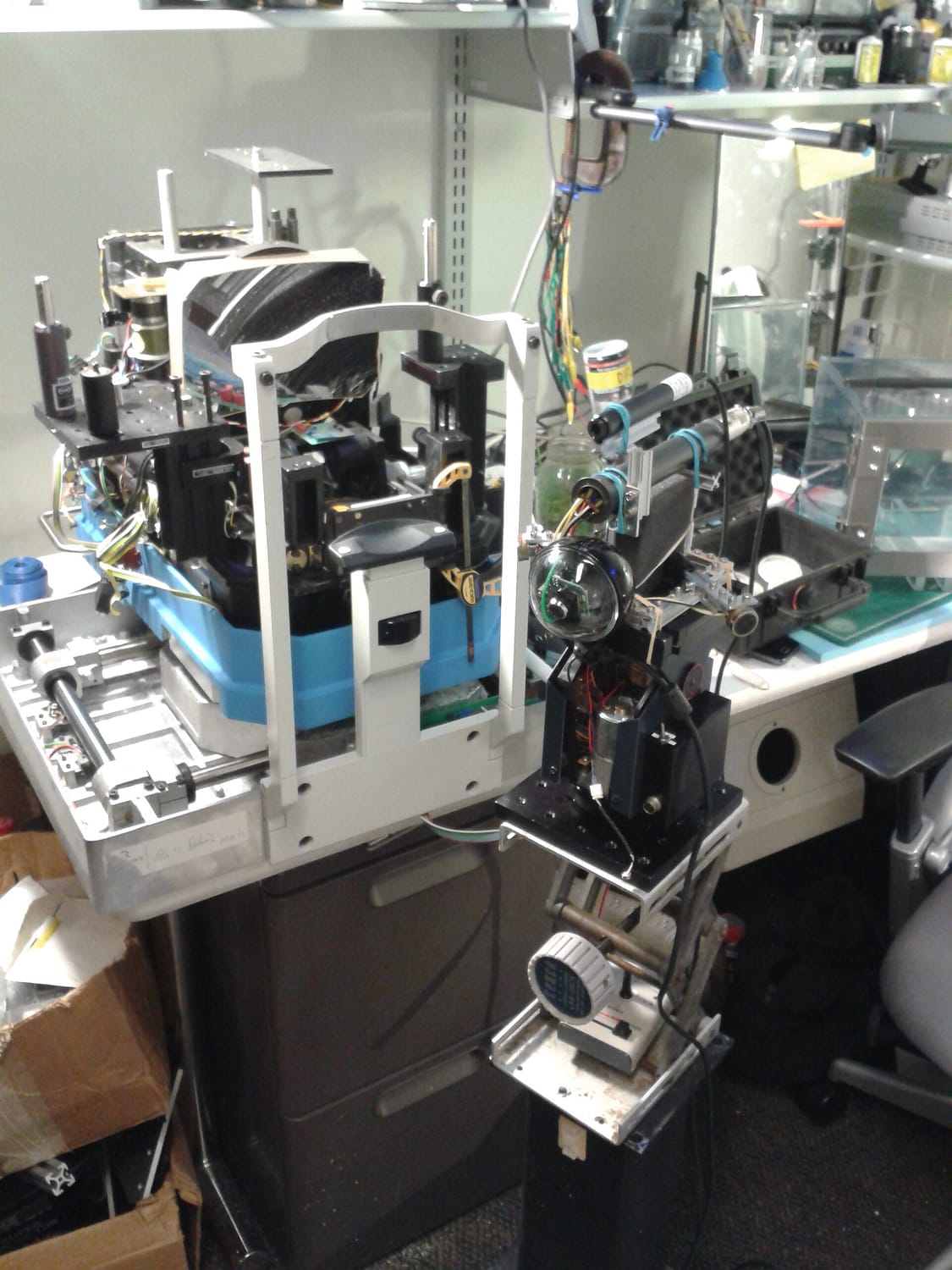

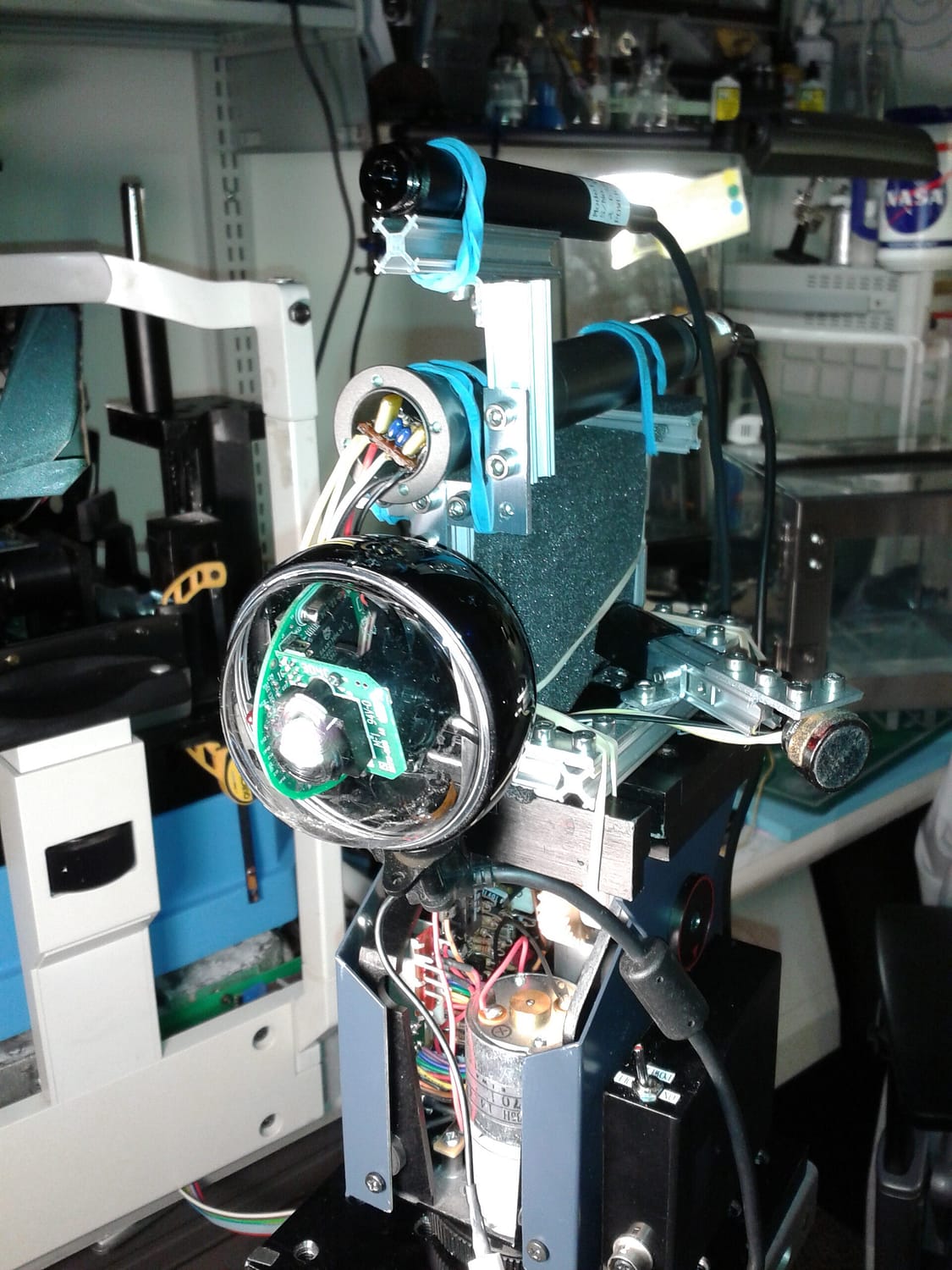

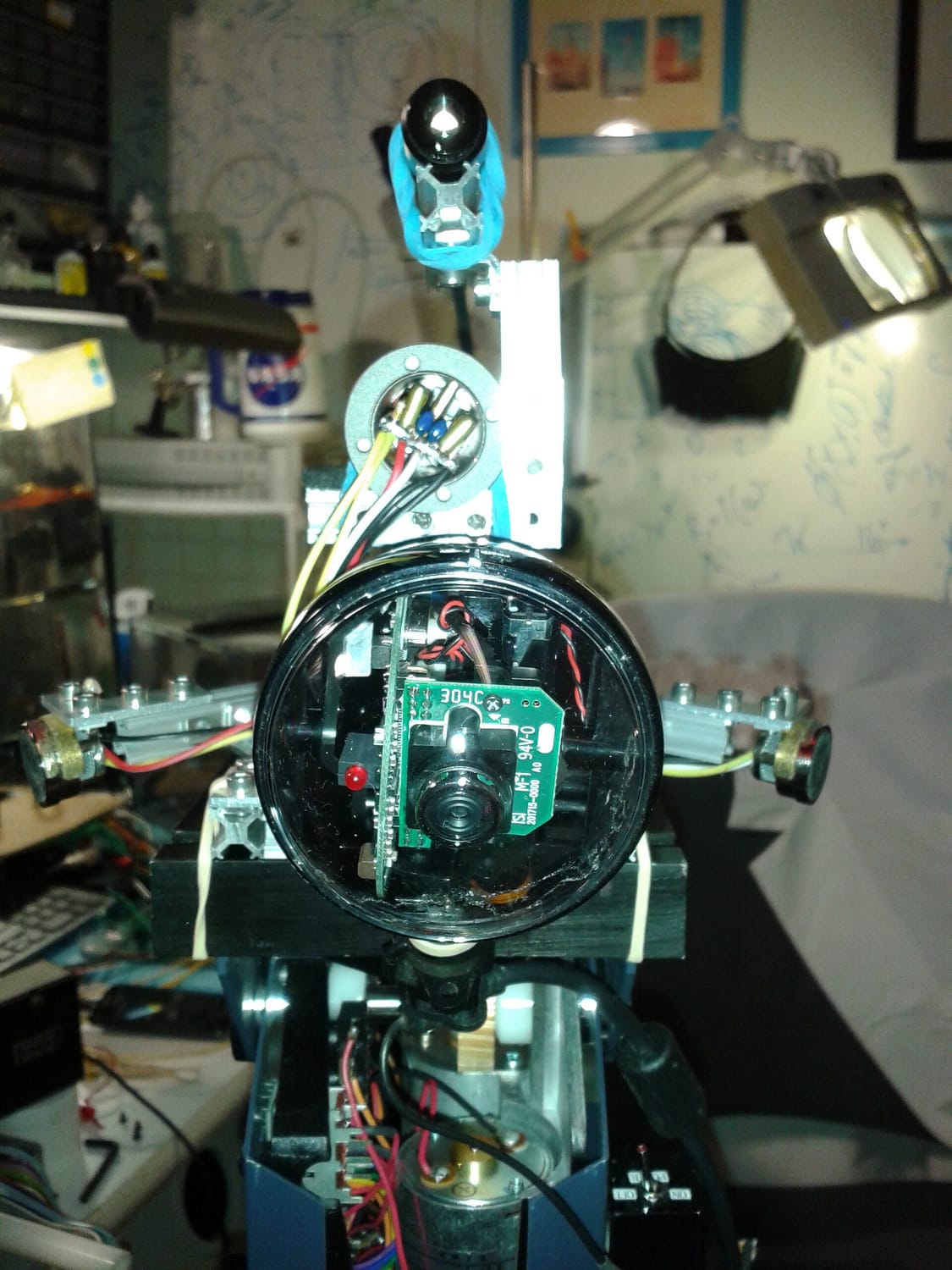

Biomimetic systems architecture engineering: prototypes

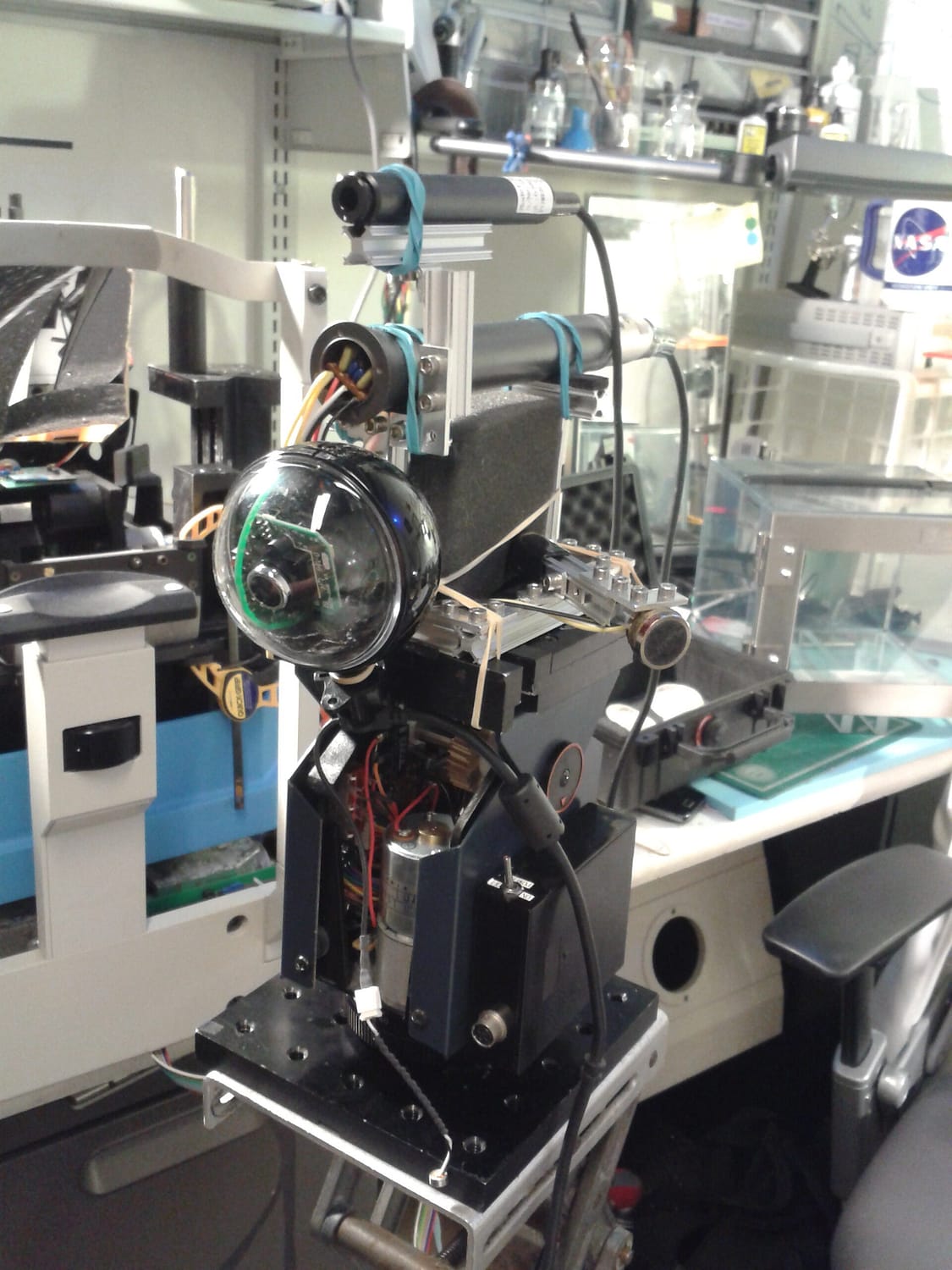

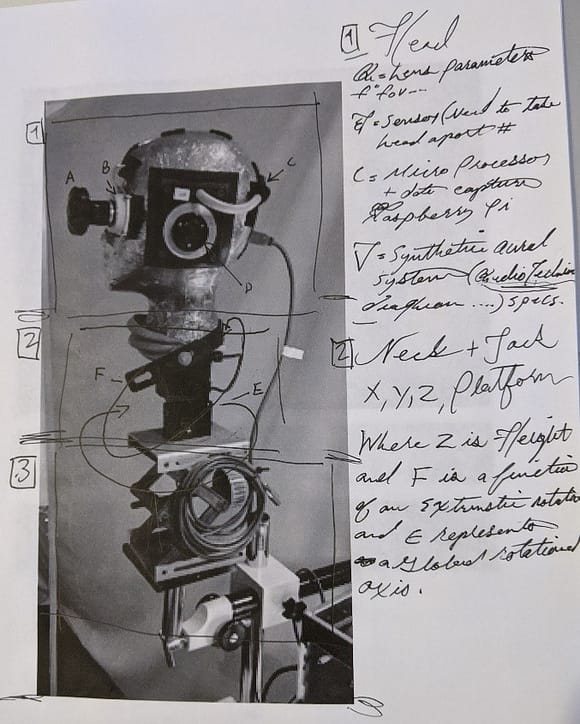

Visual perception, quantified as an expression of experience, is typically viewed as a modular function: one of several temporally sequenced sensory inputs that operate largely as separate, independent channels in the first cascade of interaction. During my dissertation, I spent a year developing, engineering, and articulating humanoid robots with biomimetic sensory capabilities to evaluate multisensory chordance between sight, sound, and environment. These robotic systems were built to operate in the field, capturing data in real time to support the development of new cybernetic interventions for augmenting human sensory experience.

Multisensory integration in real-world environments remains one of the least studied areas of perception research, and many important questions are still unanswered. The results of the above studies serve as a platform for exploring new applications and viable avenues for future research in dynamic scene integration.

This three-degree-of-freedom (3-DOF) system is a functional platform that houses cyclopean vision coupled with biomimetic scaffolding supporting binaural channels. These synthetic otolith mechanisms are a first step toward integrating biological architectures for a more dynamic understanding of multisensory chordance in complex environments.

Demonstrations:
