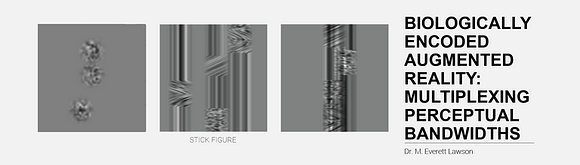

This is a stick figure. The world we perceive around us is an incomplete image, not only of what we’ve built but also of the inherent potential we have yet to unlock in our biology. We are surrounded by displays. We move through time in confined cockpits. Our realities are defined by what we are capable of perceiving. The way in which we deliver information to the senses will define how we build the future.

Abstract:

Information floods the center of our visual field and often saturates the focus of our attention, yet there are parallel channels constantly and unconsciously processing our environment. Using sensory plasticity as a medium to create new visual experiences allows us to challenge how we perceive extant realities. This dissertation describes a novel system and methodology for generating a new visual language targeting the functional biology of far peripheral mechanisms. Instead of relegating peripheral vision to the role of redirecting attentional awareness, the systems described here leverage unique faculties of far peripheral visual processing to deliver complex, semantic information beyond 50° eccentricity without redirecting gaze.

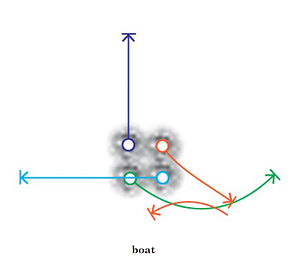

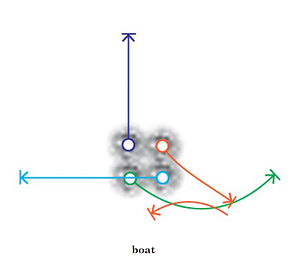

Synthetic position shifts can be elicited when frequency gratings or random dot patterns are translated behind a static aperture and viewed peripherally, a phenomenon called motion-induced position shift (MIPS). By transforming complex symbols into a series of strokes articulated through MIPS apertures, I present a codex of motion-modulated far-peripheral stimuli. The methodology follows a two-stage implementation: first, proven psychophysical constructs are integrated into contextual forms, or codex blocks; second, these blocks are adapted to an environment of complex scenes. This approach expands upon prior work not only in first principles of visual information composition and delivery but also in its capacity to convey highly abstract forms to the far periphery with no gaze diversion, via apertures spanning only 0.64 degrees of the visual field.
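As a rough illustration of the stimulus class described above, the following Python sketch generates frames of a sinusoidal grating drifting behind an aperture whose position never changes, and converts an aperture size in degrees of visual angle into pixels. It is not the dissertation's implementation. The function names, the Gaussian window used as the aperture, and any viewing distance or pixel density passed in are assumptions made purely for illustration.

```python
import math


def deg_to_px(angle_deg, distance_cm, px_per_cm):
    """Pixels subtended by angle_deg on a flat display at distance_cm.

    Uses size = 2 * d * tan(theta / 2). The viewing distance and pixel
    density are hypothetical inputs, not values from the source.
    """
    size_cm = 2.0 * distance_cm * math.tan(math.radians(angle_deg) / 2.0)
    return size_cm * px_per_cm


def aperture_frame(size_px, cycles, phase, sigma_frac=0.25):
    """One frame of a vertical grating seen through a static aperture.

    The Gaussian envelope depends only on pixel position, so the
    aperture stays fixed; only the carrier phase changes between frames.
    (Assumption: a Gaussian window stands in for the aperture.)
    """
    center = (size_px - 1) / 2.0
    sigma = sigma_frac * size_px
    frame = []
    for y in range(size_px):
        row = []
        for x in range(size_px):
            r2 = (x - center) ** 2 + (y - center) ** 2
            envelope = math.exp(-r2 / (2.0 * sigma ** 2))
            carrier = math.sin(2.0 * math.pi * cycles * x / size_px + phase)
            row.append(envelope * carrier)
        frame.append(row)
    return frame


def drift_sequence(size_px, cycles, drift_hz, frame_rate, n_frames):
    """Frames of a grating drifting toward +x behind the static aperture."""
    step = 2.0 * math.pi * drift_hz / frame_rate  # phase advance per frame
    # A decreasing phase in sin(k*x + phase) moves the pattern toward +x.
    return [aperture_frame(size_px, cycles, -i * step) for i in range(n_frames)]
```

For example, `deg_to_px(0.64, 57.0, 40.0)` gives the pixel width of a 0.64 degree aperture under an assumed 57 cm viewing distance and 40 px/cm display, and `drift_sequence(size, 2, 4.0, 60.0, 60)` would give one second of frames at an assumed 60 Hz refresh rate. Each frame holds contrast values in the range -1 to 1 that still need to be mapped to display luminance.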

Spatial compression and far peripheral delivery of complex information have immediate applications in constrained display environments for interaction, navigation, and new models of visual learning. As the technological cutting edge outpaces our physiological sensitivities, the proposed methodologies could facilitate a new approach to media generation utilizing peripheral vision as a compression algorithm, redirecting computation from external hardware to direct correlations within our biology.

Systematic and applied longitudinal studies were conducted to evaluate the codex in increasingly complex dynamic visual environments. Despite increasing scene complexity, high detection accuracy rates were achieved quickly across observers and maintained throughout the varied environments. Trends in symbol detection speed over successive trials demonstrate early learning adoption of a new visual language, supporting the framework and methods for delivering semantic information to far peripheral regions of the human retina as valuable extensions of contemporary methodologies.

High Level Excerpts

Excerpts: peripheral semantics (codex overview)

This research demonstrates a foundational approach to peripheral semantic information delivery capable of conveying highly complex symbols well beyond the established mean, using motion-modulated stimuli within a series of small, static apertures in the far periphery (> 50°).

Excerpts: stimulus preparation

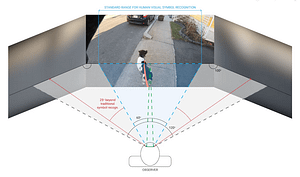

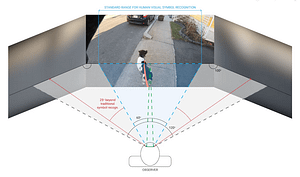

Adapting stimuli to dynamic environments

One of the greatest challenges of adapting motion-modulated semantics to real-world environments is maintaining requisite conditions for effects to occur.

Outfitting a real-time cockpit environment with adaptive perceptual skins

Information floods the center of our visual field and often saturates the focus of our attention, yet there are parallel channels in the visual system

Excerpts: codex metrics, results in controlled environments

Metrics of symbol recognition speed and accuracy in a controlled environment, with codex parameterization using motion energy analysis

Excerpts: increasing environmental complexity, human subject trials

How detectable are far peripheral semantic cues in increasingly dynamic environments? Results show rapid adaptation and high detection accuracy.
